In a fast-moving Scrum team, the gap between what stakeholders imagine and what developers build often leads to costly rework. Ambiguity is the enemy of efficiency: when requirements are vague, the team must guess, and guessing leads to errors. Acceptance Criteria (AC) serve as the contract between the intended business value and the technical implementation. They are not mere suggestions; they define the boundaries of quality.

Writing effective acceptance criteria requires precision, collaboration, and a deep understanding of the user story. This guide details the mechanics of crafting criteria that clarify expectations, reduce ambiguity, and ensure that every increment delivered is truly valuable. We will explore the structural components of high-quality criteria, the collaborative processes that surround them, and the common pitfalls that undermine them.

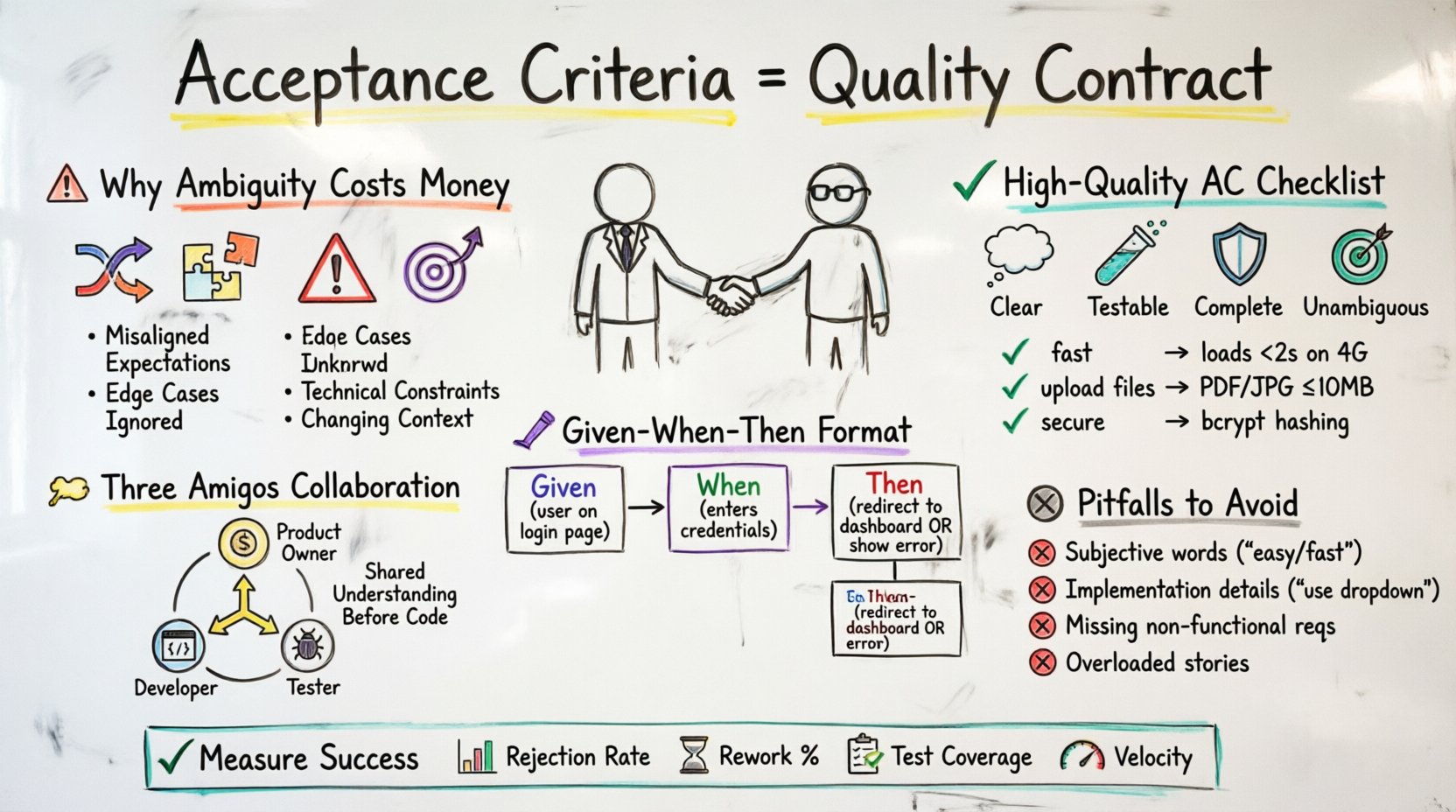

📉 Why Ambiguity Costs Money

Development rework is not simply a matter of fixing a bug; it is a drag on velocity and morale. When a developer builds a feature based on an incomplete understanding, the gap only surfaces at review. Closing it means discarding the earlier work and re-implementing the correct logic. This cycle consumes time that could have been spent on new features.

Consider the following factors that contribute to rework:

- Misaligned Expectations: The Product Owner envisions a specific workflow, but the description lacks detail.

- Edge Cases Ignored: The happy path is defined, but error handling and boundary conditions are omitted.

- Technical Constraints Unknown: The criteria do not account for performance limits or security requirements.

- Changing Context: Without clear criteria, scope creep occurs unnoticed during development.

By investing time upfront in clear criteria, teams reduce the likelihood of these issues. The goal is to shift the effort left in the lifecycle, where changes are cheaper and more impactful.

🏗️ The Anatomy of a High-Quality Acceptance Criterion

Not all acceptance criteria are created equal. Some are too broad, allowing for interpretation. Others are too specific, locking the team into a single implementation that may not be optimal. The sweet spot lies in defining what the system must do, without dictating how it must be built.

High-quality criteria should be:

- Clear: Written in plain language that everyone on the team understands.

- Testable: There must be a way to verify the condition is met.

- Complete: Covers all relevant scenarios, including negative paths.

- Unambiguous: Uses specific numbers and terms rather than subjective adjectives.

Below is a comparison of poor versus strong criteria to illustrate the difference.

| ❌ Vague Criterion | ✅ Precise Criterion |
|---|---|
| The system should be fast. | The page loads in under 2 seconds on a standard 4G connection. |
| Users can upload files. | Users can upload files up to 10MB in PDF or JPG format. |
| The search function works well. | Search returns results within 500ms and handles typos via fuzzy matching. |
| Ensure data is secure. | Passwords are hashed using bcrypt before storage. |
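One way to see why the precise version is easier to build against is to try writing it as a check. The sketch below is a minimal illustration for the file upload criterion, not a prescribed implementation. The function name `is_upload_accepted` and both constants are hypothetical, and the code assumes that "10MB" means 10 × 1024 × 1024 bytes and that only the `.pdf` and `.jpg` extensions qualify.

```python
from pathlib import PurePath

# Assumption: "10MB" is read as 10 * 1024 * 1024 bytes.
MAX_UPLOAD_BYTES = 10 * 1024 * 1024
# Assumption: only these two extensions count; ".jpeg" is deliberately left out.
ALLOWED_EXTENSIONS = {".pdf", ".jpg"}


def is_upload_accepted(filename: str, size_bytes: int) -> bool:
    """Return True when a file satisfies the upload criterion as read above."""
    extension = PurePath(filename).suffix.lower()
    return extension in ALLOWED_EXTENSIONS and size_bytes <= MAX_UPLOAD_BYTES
```

Even this small sketch surfaces questions the criterion leaves open, such as whether `.jpeg` files are allowed and which definition of a megabyte applies. Those are good questions to settle with the Product Owner before development starts.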

🔍 Techniques for Precision

To achieve the clarity required to prevent rework, teams should employ structured writing techniques. These methods force the writer to think through the logic before the code is written.

1. The Given-When-Then Format

This format, which is the basis of the Gherkin language used by tools such as Cucumber, structures criteria into three distinct parts:

- Given: The initial context or state of the system.

- When: The action or event that occurs.

- Then: The observable outcome or result.

This structure is powerful because it maps directly to automated testing. If you can write the criterion in this format, you can often write the test case immediately. For example:

Given the user is on the login page,

When they enter a valid email and password,

Then they are redirected to the dashboard.

Conversely, a negative scenario would look like this:

Given the user is on the login page,

When they enter an incorrect password,

Then they see an error message and remain on the login page.
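The two login scenarios above can be sketched as tests to show how closely the format maps to test code. Everything in this example is a hypothetical stand-in: `submit_login`, `LoginResult`, and the in-memory `VALID_CREDENTIALS` table exist only so the sketch runs on its own, and a real system under test would replace them.

```python
from dataclasses import dataclass

# Hypothetical in-memory account store, used only for this illustration.
VALID_CREDENTIALS = {"ana@example.com": "correct-horse"}


@dataclass
class LoginResult:
    page: str        # the page the user ends up on
    error: str = ""  # empty when the login succeeds


def submit_login(email: str, password: str) -> LoginResult:
    """Hypothetical stand-in for the login feature under test."""
    if email in VALID_CREDENTIALS and VALID_CREDENTIALS[email] == password:
        return LoginResult(page="dashboard")
    return LoginResult(page="login", error="Invalid email or password.")


def test_valid_login_redirects_to_dashboard():
    # Given the user is on the login page
    email, password = "ana@example.com", "correct-horse"
    # When they enter a valid email and password
    result = submit_login(email, password)
    # Then they are redirected to the dashboard
    assert result.page == "dashboard"
    assert result.error == ""


def test_incorrect_password_shows_error():
    # Given the user is on the login page
    email = "ana@example.com"
    # When they enter an incorrect password
    result = submit_login(email, "wrong-password")
    # Then they see an error message and remain on the login page
    assert result.page == "login"
    assert result.error != ""
```

Each comment repeats a line of the criterion, so a reviewer can check the test against the story without reading the implementation.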

2. Business Rules and Constraints

Acceptance criteria often need to encode specific business rules. These are non-negotiable constraints imposed by the organization or legal requirements. Be explicit about these constraints.

- Financial Limits: Transactions cannot exceed $10,000 without manager approval.

- Compliance: User data must be retained for 7 years as per local regulation.

- Capacity: The system must support 1,000 concurrent users.
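An explicit business rule tends to translate into a very small amount of logic, which makes boundary questions easy to spot. The sketch below illustrates the financial limit. The names `APPROVAL_THRESHOLD`, `requires_manager_approval`, and `can_process` are hypothetical, and it assumes "cannot exceed" means a transaction of exactly $10,000 goes through without approval.

```python
from decimal import Decimal

# Threshold taken from the criterion: $10,000.
APPROVAL_THRESHOLD = Decimal("10000")


def requires_manager_approval(amount: Decimal) -> bool:
    """Assumption: only amounts strictly above the threshold need approval."""
    return amount > APPROVAL_THRESHOLD


def can_process(amount: Decimal, manager_approved: bool = False) -> bool:
    """A transaction goes through if it is within the limit or has approval."""
    return manager_approved or not requires_manager_approval(amount)
```

Whether the boundary value itself requires approval is exactly the kind of detail worth stating in the criterion rather than leaving to the developer.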

3. Edge Cases and Error Handling

Most rework happens when the system behaves unexpectedly. Developers often focus on the happy path. Criteria must explicitly address what happens when things go wrong.

- What happens if the internet connection drops during a submission?

- What happens if a database query times out?

- What happens if the input field receives special characters?
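Answering these questions in the criteria means each failure gets a named, observable outcome. The following sketch shows one possible set of answers, not the only reasonable one. It assumes that a dropped connection or a timeout should ask the user to retry, and that special characters are stored unchanged. `Outcome` and `handle_submission` are hypothetical names, and `save` is any callable supplied by the caller.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    SAVED = "saved"
    RETRY_LATER = "retry_later"


def handle_submission(text: str, save: Callable[[str], None]) -> Outcome:
    """Try to save the text and report an explicit outcome for each failure.

    Assumption: `save` raises ConnectionError when the connection drops
    and TimeoutError when the database query times out.
    """
    try:
        save(text)  # special characters are passed through unchanged
    except (ConnectionError, TimeoutError):
        return Outcome.RETRY_LATER
    return Outcome.SAVED
```

In a test, `save` can be replaced with a function that raises the relevant exception, which makes each edge case as easy to verify as the happy path.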

🤝 Collaboration and the Three Amigos

Writing acceptance criteria is rarely a solitary task. It requires input from the three key perspectives involved in the delivery of value: the Product Owner, the Developer, and the Tester. This practice is often called the “Three Amigos” meeting.

During this collaborative session, the team reviews the user story and drafts the criteria together. Each perspective adds necessary depth:

- Product Owner: Ensures the criteria reflect the business value and user needs.

- Developer: Identifies technical feasibility and potential architectural impacts.

- Tester: Focuses on edge cases, security, and how to verify the criteria.

This collaboration prevents the common trap of the Product Owner writing criteria that are technically impossible, or the Developer writing criteria that miss the business intent. It builds shared understanding before a single line of code is written.

🔄 Acceptance Criteria vs. Definition of Done

It is common to confuse Acceptance Criteria with the Definition of Done (DoD). While they are related, they serve different purposes. Understanding the distinction is crucial for accurate planning.

- Acceptance Criteria: Specific to a single User Story. It defines what makes that feature complete and valuable to the user.

- Definition of Done: Applies to all User Stories. It defines the quality standards for the entire increment (e.g., code reviewed, unit tests passed, deployed to staging).

If the DoD is not met, the story is not done, regardless of whether the acceptance criteria are met. If the acceptance criteria are not met, the story is not valuable, even if the DoD is satisfied.
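The relationship between the two checks can be sketched as a small decision function. This is an illustration of the rule described above, with hypothetical names and status strings. Each argument maps a check name to whether it passed.

```python
def story_status(acceptance: dict, definition_of_done: dict) -> str:
    """Combine story-specific criteria with the team-wide Definition of Done."""
    criteria_met = all(acceptance.values())
    dod_met = all(definition_of_done.values())
    if criteria_met and dod_met:
        return "done"
    if criteria_met:
        return "not done: Definition of Done unmet"
    if dod_met:
        return "not valuable: acceptance criteria unmet"
    return "not done: criteria and Definition of Done unmet"


# Example: the feature behaves correctly but has not been code reviewed.
status = story_status(
    acceptance={"redirects to dashboard": True, "shows error on bad password": True},
    definition_of_done={"code reviewed": False, "unit tests passed": True},
)
```

Here `status` is `"not done: Definition of Done unmet"`, because passing every acceptance criterion is not enough on its own.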

🚫 Common Pitfalls to Avoid

Even experienced teams fall into traps that degrade the quality of their criteria. Awareness of these pitfalls is the first step toward mitigation.

1. Using Subjective Language

Words like “easy,” “fast,” “intuitive,” or “robust” are subjective. One person’s intuitive is another’s confusing. Replace these with measurable standards.

- ❌ The interface should be intuitive.

- ✅ Users can complete the checkout flow in three clicks or fewer.

2. Focusing on Implementation Details

Acceptance criteria should describe behavior, not implementation. If you specify the technology or the UI control, you limit the developer’s ability to choose the best solution.

- ❌ The system must use a dropdown menu for selection.

- ✅ Users must select one option from a list of five.

3. Ignoring Non-Functional Requirements

Performance, security, and accessibility are often forgotten until the end of the sprint. Include them in the criteria if they are critical to the user story.

- ✅ The image gallery must support keyboard navigation.

- ✅ The API response time must not exceed 200ms.

4. Overloading a Single Story

If a user story requires too many complex acceptance criteria, it is likely too big. Break it down into smaller stories. Large stories are harder to estimate, harder to test, and harder to integrate.

📊 Measuring Success

How do you know if your acceptance criteria are working? You need metrics that reflect quality and efficiency. Track these indicators over time:

- Rejection Rate: What share of stories are rejected during the sprint review due to missing or unmet criteria?

- Rework Percentage: What portion of the sprint was spent fixing issues that should have been caught by the criteria?

- Test Coverage: Do the acceptance criteria map directly to automated tests?

- Team Velocity: Does the team move faster once the criteria are clear?

Review these metrics in the Retrospective. If rework is high, analyze the criteria that failed. Did the team miss an edge case? Was the language ambiguous? Use this data to refine the process.
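The first two indicators are simple ratios, and a minimal sketch of how a team might compute them for the Retrospective follows. The `SprintRecord` fields are hypothetical and assume the team tracks reviewed and rejected story counts along with total and rework hours. The sample numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class SprintRecord:
    stories_reviewed: int
    stories_rejected: int
    total_hours: float
    rework_hours: float


def rejection_rate(sprint: SprintRecord) -> float:
    """Fraction of reviewed stories rejected at the sprint review."""
    if sprint.stories_reviewed == 0:
        return 0.0
    return sprint.stories_rejected / sprint.stories_reviewed


def rework_percentage(sprint: SprintRecord) -> float:
    """Percentage of sprint hours spent on rework."""
    if sprint.total_hours == 0:
        return 0.0
    return 100 * sprint.rework_hours / sprint.total_hours


# Invented sample data: 10 stories reviewed, 2 rejected, 60 of 400 hours on rework.
sample = SprintRecord(stories_reviewed=10, stories_rejected=2,
                      total_hours=400.0, rework_hours=60.0)
```

For this sample, `rejection_rate(sample)` is 0.2 and `rework_percentage(sample)` is 15.0. Comparing these values across several sprints is more informative than any single reading.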

🛠️ Refining the Process Over Time

Writing acceptance criteria is a skill that improves with practice. No team gets it perfect on the first try. Continuous improvement is necessary.

- Review Past Stories: Look at stories from previous sprints. Which ones caused confusion? Rewrite the criteria for similar stories in the current backlog.

- Standardize Templates: Create a shared template for common types of stories (e.g., login, search, dashboard). This ensures consistency.

- Train the Team: Ensure all team members understand how to write and review criteria. Conduct workshops if necessary.

- Encourage Questions: Foster a culture where asking “What does this mean?” is welcomed rather than treated as a sign of weakness.

❓ Frequently Asked Questions

Q: Can acceptance criteria change during development?

A: Yes, but it should be rare. If the criteria change significantly, the story may need to be re-estimated or split. Discuss any changes immediately with the team to avoid wasted effort.

Q: Who is responsible for writing the criteria?

A: Ideally, the Product Owner writes the first draft, but the whole team collaborates to refine it. The team owns the final version because they are the ones building and testing it.

Q: How many criteria should a story have?

A: There is no fixed number. It depends on the complexity. Typically, 3 to 7 criteria provide enough detail without being overwhelming. If you have more, consider splitting the story.

Q: What if a criterion cannot be tested?

A: If it cannot be tested, it cannot be verified. You must rewrite it to be observable. If you cannot measure it, you cannot know if it is done.

Q: Does this apply to non-software projects?

A: The principles apply to any project requiring clear requirements. The terminology may change, but the need for testable, unambiguous conditions remains.

🚀 Moving Forward

Implementing a rigorous approach to acceptance criteria is a journey. It requires discipline and a commitment to clarity. By defining the boundaries of work clearly, teams can focus on execution rather than clarification. This shift reduces friction, improves quality, and delivers value faster.

Start by reviewing your next sprint backlog. Pick one user story and rewrite its acceptance criteria using the techniques outlined above. Test the new process. Measure the difference. Over time, the reduction in rework will become evident, and the team will operate with greater confidence and flow.

Remember, the goal is not perfection, but continuous improvement. Every story is an opportunity to refine how you define value. Keep the focus on the user, keep the language precise, and keep the collaboration open.