Strategic planning is the backbone of organizational longevity. Yet, many teams stumble at the very first hurdle: the SWOT analysis. While the framework itself is decades old, the execution often lacks depth. A generic list of Strengths, Weaknesses, Opportunities, and Threats often becomes a static document that sits on a shelf rather than a living tool for decision-making.

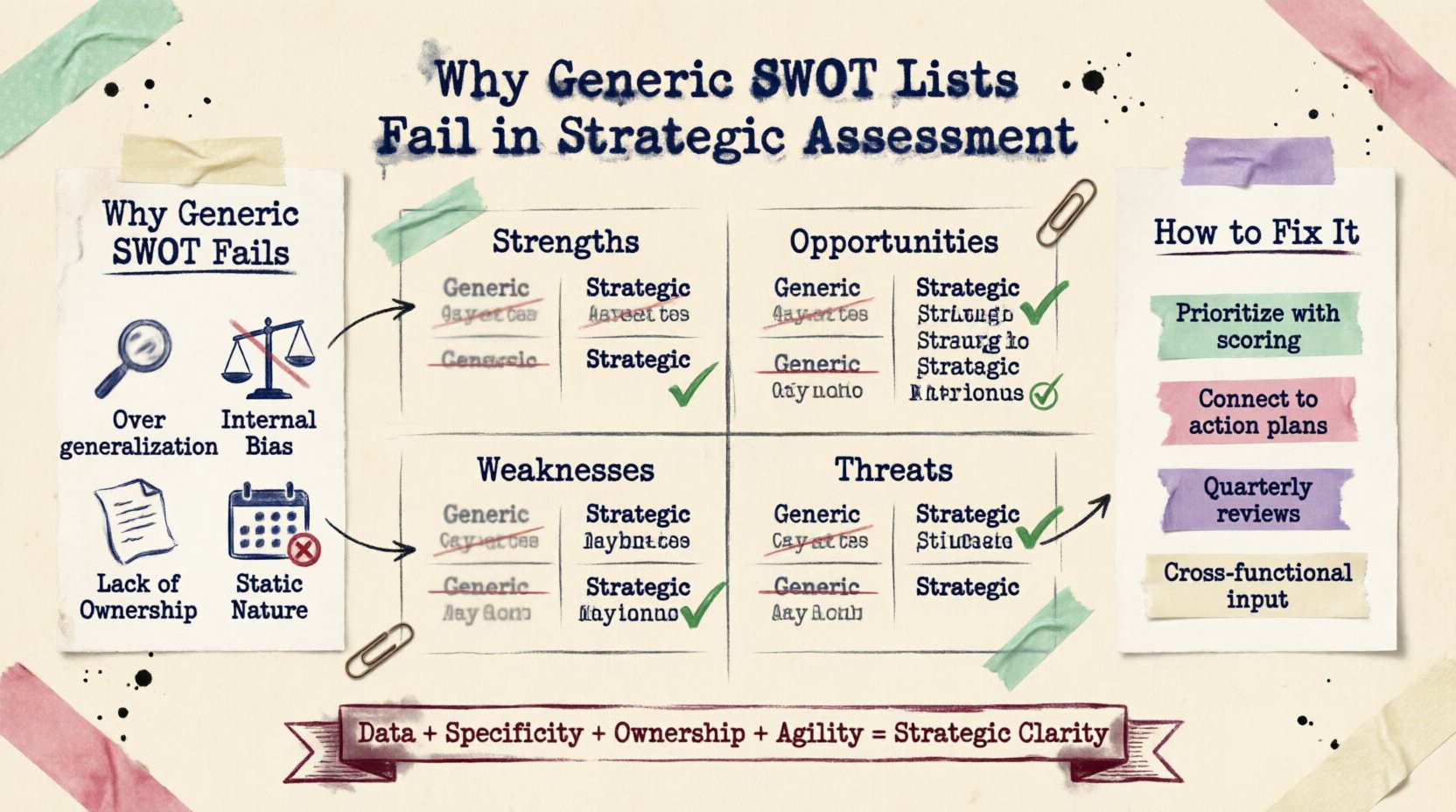

This article explores the structural and psychological reasons why superficial SWOT lists fail to drive real strategic assessment. We will examine the gap between identification and actionable insight, the dangers of cognitive bias, and how to shift from static checklists to dynamic strategic frameworks.

The Allure of Simplicity vs. Strategic Reality 🧩

Organizations love frameworks that appear simple. The SWOT matrix meets this criterion perfectly. It divides complex business environments into four quadrants. However, simplicity often masks complexity. When a team fills out a SWOT in a single workshop, the result is frequently a collection of broad statements rather than specific, measurable data points.

- Generic Strengths: “We have a strong brand” is not a strategy. It is a feeling.

- Generic Weaknesses: “Poor communication” is vague. Which department? What process?

- Generic Opportunities: “Market growth” is a macro trend, not a specific lever to pull.

- Generic Threats: “Competitor X” is a fact, but “Competitor X launching Product Y” is intelligence.

The failure lies in the lack of granularity. Strategic assessment requires specificity. A generic list provides comfort without clarity. It allows stakeholders to feel productive without engaging in the difficult work of critical analysis.

Deconstructing the Four Quadrants 🧱

To understand the failure, we must look at how each quadrant is typically misused. A robust assessment requires data, not just opinion.

Strengths: Internal Capabilities

True strengths are unique assets that provide a competitive advantage. They must be rare and valuable. Generic lists often confuse scale or operational efficiency with strategic advantage. For example, having a large team is not a strength if the team is unskilled. A strategic strength must be defensible.

Weaknesses: Internal Gaps

Identifying weaknesses is often politically difficult. Teams may hide critical gaps to avoid blame. Consequently, the “Weakness” section becomes a list of minor inconveniences rather than existential risks. A strategic assessment must be ruthless in identifying areas where the organization is vulnerable.

Opportunities: External Potential

Opportunities must be actionable. A market trend is only an opportunity if the organization has the capacity to exploit it. Generic lists often catalog external trends without assessing internal readiness. This leads to strategy drift, where the company chases every new trend without a clear path to profit.

Threats: External Risks

Threats are often treated as inevitabilities. A strategic assessment distinguishes between manageable risks and existential threats. Generic lists fail to assign probability or impact scores. Without risk quantification, mitigation planning becomes guesswork.
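One way to make the probability and impact scoring concrete is sketched below. It is a minimal illustration, not a standard method: the `Threat` class, the 1 to 5 impact scale, the `critical_at` cutoff, and all the numbers are assumptions chosen for the example, and real estimates would come from the team's own data.

```python
from dataclasses import dataclass


@dataclass
class Threat:
    name: str
    probability: float  # estimated likelihood over the planning horizon, 0.0 to 1.0
    impact: int         # estimated severity, 1 (minor) to 5 (existential)


def risk_score(threat: Threat) -> float:
    """Probability-weighted impact; a higher score means a more urgent threat."""
    return threat.probability * threat.impact


def classify(threat: Threat, critical_at: float = 2.5) -> str:
    """Label a threat using a hypothetical cutoff on the risk score."""
    return "critical" if risk_score(threat) >= critical_at else "manageable"


# Illustrative entries only; the probabilities and impacts are made up.
threats = [
    Threat("Competitor X launches Product Y", probability=0.6, impact=5),
    Threat("Key supplier raises prices", probability=0.8, impact=2),
    Threat("New data regulation", probability=0.3, impact=4),
]

# Print threats from highest to lowest risk score.
for t in sorted(threats, key=risk_score, reverse=True):
    print(f"{t.name}: score={risk_score(t):.1f} ({classify(t)})")
```

Even a rough score like this forces the team to state how likely and how severe each threat is, which gives mitigation planning a starting order instead of a flat list.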

The Data Deficit in Static Lists 📊

One of the primary reasons generic SWOT lists fail is the absence of empirical data. Strategic assessment relies on evidence, not intuition. When a team relies on memory or anecdotal evidence during a brainstorming session, the output is biased.

Consider the following differences between a generic list and a data-driven assessment:

- Source of Information: Generic lists rely on workshop participants. Data-driven assessments rely on customer feedback, financial reports, and market research.

- Timeframe: Generic lists often reflect the present moment. Strategic assessments project forward based on historical trends.

- Validation: Generic lists are rarely validated. Data-driven assessments are tested against real-world outcomes.

Without data, the SWOT analysis becomes a popularity contest. The loudest voice in the room often dictates the contents of the matrix. This silences dissenting opinions that might be critical for a balanced view.

Common Failure Modes in Strategic Assessment 🛑

Understanding where the process breaks down is crucial for improvement. The table below outlines common pitfalls and their strategic consequences.

| Failure Mode | Description | Strategic Consequence |
|---|---|---|
| Overgeneralization | Using vague terms like “good service” or “high costs”. | Prevents targeted action plans and resource allocation. |
| Internal Bias | Overestimating strengths and underestimating threats. | Leads to overconfidence and missed warning signs. |
| Lack of Ownership | No one is assigned to address specific items. | The document becomes a historical artifact, not a plan. |
| Static Nature | Treated as a one-time annual exercise. | Strategy becomes obsolete as market conditions change. |

Cognitive Biases in Assessment 🧠

Human psychology plays a significant role in why strategic assessments fail. Even with the best intentions, cognitive biases distort the data.

- Confirmation Bias: Teams seek information that supports their existing beliefs. If leaders believe the strategy is working, they will list more strengths than weaknesses.

- Recency Bias: Recent events weigh too heavily. A recent product launch success might overshadow long-term structural weaknesses.

- Groupthink: In a workshop setting, individuals may conform to the group consensus to avoid conflict. This suppresses critical analysis of weaknesses.

Addressing these biases requires structured processes. Anonymous input, data review, and external facilitators can help mitigate these psychological traps.

From Static Lists to Dynamic Matrices 🔄

Success requires moving beyond the static SWOT list. The goal is to create a dynamic strategic assessment tool that evolves with the organization.

1. Prioritization is Key

Not all items in the SWOT are equal. A strategic assessment must prioritize. Use a scoring model to rank items based on impact and feasibility. Focus resources on high-impact opportunities and critical threats.
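A scoring model of this kind could look like the sketch below. The `SwotItem` type, the 1 to 5 scales, and the 60/40 weighting toward impact are hypothetical choices for illustration; any team would need to agree on its own scales and weights.

```python
from typing import NamedTuple


class SwotItem(NamedTuple):
    quadrant: str
    description: str
    impact: int       # 1 (low) to 5 (high)
    feasibility: int  # 1 (hard to act on) to 5 (easy to act on)


def priority(item: SwotItem, impact_weight: float = 0.6) -> float:
    """Weighted average of impact and feasibility (the weight is illustrative)."""
    return impact_weight * item.impact + (1 - impact_weight) * item.feasibility


def rank(items: list[SwotItem]) -> list[SwotItem]:
    """Return items ordered from highest to lowest priority."""
    return sorted(items, key=priority, reverse=True)


# Example entries with made-up scores.
items = [
    SwotItem("Opportunity", "Expand into an adjacent segment", impact=5, feasibility=3),
    SwotItem("Weakness", "Slow onboarding in customer support", impact=3, feasibility=5),
    SwotItem("Threat", "Competitor X launching Product Y", impact=4, feasibility=2),
]

for item in rank(items):
    print(f"{priority(item):.1f}  {item.quadrant}: {item.description}")
```

The exact formula matters less than applying the same one to every item, so that the ranking reflects stated criteria rather than the loudest voice in the room.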

2. Connect to Action

Every item identified must link to an action plan. If a weakness is identified, there must be a remediation plan. If an opportunity is found, there must be an investment plan. This linkage ensures the assessment translates to execution.

3. Regular Review Cycles

Strategy is not a destination. Market conditions shift. Quarterly reviews of the SWOT analysis ensure the strategy remains relevant. This agility allows the organization to pivot before a threat becomes a crisis.

4. Cross-Functional Input

Strategy should not be confined to the executive suite. Input from sales, engineering, and customer support provides a 360-degree view. Different departments see different threats and opportunities.

Implementation and Accountability 💼

A strategic assessment is only as good as its implementation. Ownership is the missing link in many organizations.

- Assign Owners: Every strategic initiative must have a named owner.

- Set Timelines: Deadlines create urgency and focus.

- Measure Progress: Define KPIs to track the success of the strategic initiatives derived from the assessment.

- Communicate: Share the results with the broader organization to ensure alignment.
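The checklist above can be expressed as a simple record with an audit step, as in the following sketch. The `Initiative` fields, the `gaps` helper, and the sample entries (including the owner title and date) are hypothetical and exist only to show the idea of flagging items that lack an owner, a deadline, or a KPI.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Initiative:
    title: str
    owner: Optional[str]  # named person accountable for the item
    due: Optional[date]   # deadline for the initiative
    kpi: Optional[str]    # metric used to measure progress


def gaps(initiative: Initiative) -> list[str]:
    """List which accountability fields are still missing."""
    missing = []
    if not initiative.owner:
        missing.append("owner")
    if initiative.due is None:
        missing.append("due date")
    if not initiative.kpi:
        missing.append("KPI")
    return missing


# Illustrative entries only.
initiatives = [
    Initiative("Reduce support response time", "Head of Support",
               date(2025, 6, 30), "median first-response time"),
    Initiative("Respond to Competitor X launching Product Y", None,
               None, "win rate against Competitor X"),
]

for i in initiatives:
    missing = gaps(i)
    status = "ready" if not missing else "missing: " + ", ".join(missing)
    print(f"{i.title}: {status}")
```

Running a check like this at each review makes unowned items visible before they quietly drop off the agenda.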

When accountability is clear, the generic list transforms into a roadmap. The document becomes a living contract between the leadership team and the organization.

Final Thoughts on Strategic Rigor 🎯

The failure of generic SWOT lists is not a failure of the framework itself, but a failure of rigor. Strategic assessment requires discipline, data, and honesty. It demands that leaders look beyond the surface and confront uncomfortable truths.

By addressing cognitive biases, prioritizing data over opinion, and linking analysis to action, organizations can transform a simple checklist into a powerful engine for growth. The goal is not just to fill out a matrix, but to understand the complex dynamics that drive business performance. When done correctly, the assessment provides clarity in a chaotic market.

Invest the time to do this work properly. The cost of a generic list is low, but the cost of a failed strategy is high. Build your assessment on solid ground, and your strategic decisions will follow.