Enterprise Architecture (EA) serves as the blueprint for organizational transformation. Without a clear understanding of where an organization stands, improvement becomes guesswork. Evaluating Enterprise Architecture Maturity Models provides the necessary lens to assess current capabilities, identify gaps, and plan for future growth. This guide explores the mechanics of maturity assessments, the frameworks available, and the steps required to derive actionable insights.

🔍 Understanding Maturity Models in EA

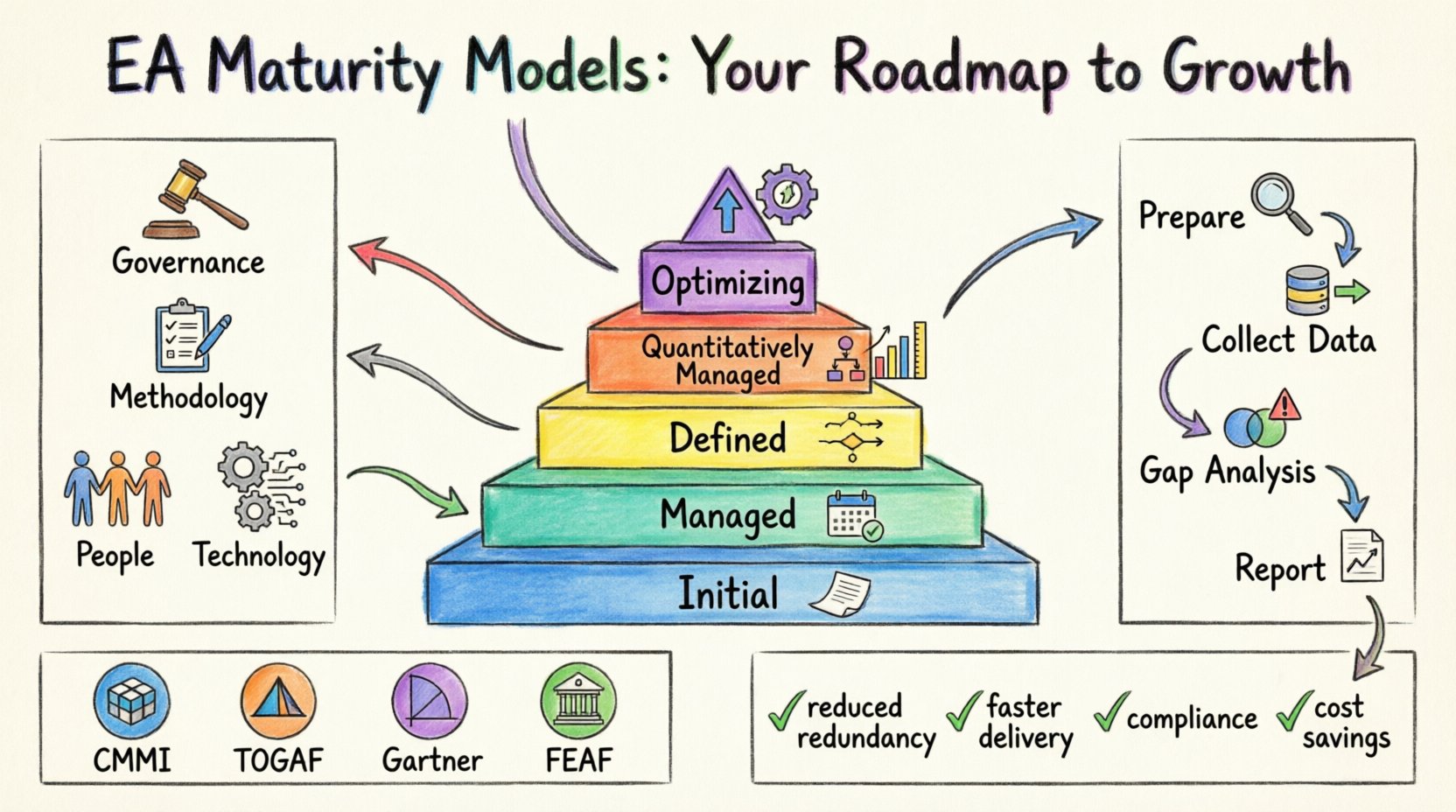

A maturity model is a structured set of practices that describes how an organization can move from an ad-hoc state to a managed and optimized state. In the context of Enterprise Architecture, these models do not just measure technology stacks. They measure governance, people, processes, and the alignment of IT with business goals.

When evaluating these models, it is essential to recognize that maturity is not a binary state. It is a spectrum. Organizations typically progress through defined levels. Moving up this spectrum usually results in better decision-making, reduced redundancy, and increased agility.

📊 The Five Levels of Maturity

Most standard models use a five-level structure; the level names below follow CMMI. Understanding the characteristics of each level helps in positioning your organization accurately.

- Level 1: Initial – Processes are ad-hoc and chaotic. Success depends on individual heroics rather than established systems. Documentation is scarce.

- Level 2: Managed – Basic project management processes exist. Requirements are tracked, and earlier successes can be repeated on similar projects. However, results are not yet consistent across the enterprise.

- Level 3: Defined – Processes are documented, standardized, and integrated into a coherent architecture. Training is provided, and the methodology is proactive.

- Level 4: Quantitatively Managed – Detailed metrics are collected. Performance is understood through statistical techniques. Risks are measured and controlled quantitatively.

- Level 5: Optimizing – Continuous improvement is driven by feedback loops and innovation. The architecture evolves proactively to meet future business needs.
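When scoring, it helps to make the ordering of the levels explicit by modeling them as an ordered enumeration. The Python sketch below is illustrative only. The `MaturityLevel` and `level_from_score` names are hypothetical, and rounding a fractional average *down* to the level the organization has fully achieved is an assumed convention, not a rule from any particular framework.

```python
from enum import IntEnum


class MaturityLevel(IntEnum):
    """The five tiers above; member names are illustrative, not taken from a specific tool."""
    INITIAL = 1
    MANAGED = 2
    DEFINED = 3
    QUANTITATIVELY_MANAGED = 4
    OPTIMIZING = 5


def level_from_score(score: float) -> MaturityLevel:
    """Map an averaged 1.0-5.0 score to a level by truncating the fractional part.

    Assumption: a level counts only once it is fully met, so 3.7 is Level 3 (Defined).
    """
    if not 1.0 <= score <= 5.0:
        raise ValueError(f"score must be between 1 and 5, got {score}")
    return MaturityLevel(int(score))


print(level_from_score(3.7).name)  # DEFINED
```

Because `IntEnum` members compare like integers, expressions such as `MaturityLevel.DEFINED > MaturityLevel.MANAGED` work directly, which is convenient when comparing current and target states.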

🏛️ Common Frameworks and Approaches

Several frameworks exist to guide this evaluation. Each offers a unique perspective on what constitutes “good” architecture. Selecting the right framework depends on organizational culture and strategic goals.

📑 Framework Comparison

| Framework | Primary Focus | Best Used For |
|---|---|---|
| Capability Maturity Model Integration (CMMI) | Process Improvement | Organizations focused on engineering and development processes. |
| TOGAF Architecture Maturity Model | Enterprise Architecture Capability | Organizations seeking to standardize their EA practice and governance. |
| Gartner EA Maturity Model | Strategic Alignment | Leaders looking to align IT capabilities directly with business strategy. |
| FEAF (Federal Enterprise Architecture Framework) | Government and Public Sector | Entities requiring compliance with public sector standards. |

📏 Key Dimensions of Evaluation

A comprehensive assessment must look beyond the documentation and evaluate how architecture is actually practiced across the organization. The following dimensions provide a holistic view of EA health.

1. Governance and Strategy

- Does the architecture board meet regularly and with authority?

- Are there clear decision-making pathways for technology investments?

- Is the EA strategy aligned with the overall business roadmap?

- How are conflicts between business units and IT resolved?

2. Methodology and Standards

- Are there defined methods for creating architecture artifacts?

- Are naming conventions and data standards enforced?

- Is there a repository for storing architecture assets?

- How is the lifecycle of an application managed from design to retirement?

3. People and Organization

- What are the defined roles and responsibilities for architects?

- Is there a career path for enterprise architects?

- How is knowledge shared across the team?

- Do stakeholders understand the value of architecture?

4. Technology and Tools

- Is there an automated repository or catalog for architecture data?

- Are tools integrated with development pipelines?

- How is data quality maintained within the architecture models?

- Is the technology stack diverse, or is it overly reliant on a single vendor?
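One way to turn these questions into scores is a simple rubric: rate each question from 1 to 5 and take each dimension's score as the average of its ratings. The sketch below assumes all questions carry equal weight. The ratings are made-up sample data, and `dimension_scores` is a hypothetical helper.

```python
from statistics import mean

# Hypothetical 1-5 ratings, one per question listed under each dimension above.
responses: dict[str, list[int]] = {
    "Governance and Strategy": [3, 2, 3, 2],
    "Methodology and Standards": [3, 3, 4, 2],
    "People and Organization": [2, 1, 2, 3],
    "Technology and Tools": [4, 3, 3, 4],
}


def dimension_scores(answers: dict[str, list[int]]) -> dict[str, float]:
    """Average each dimension's question ratings (equal weighting is an assumption)."""
    scores = {}
    for dimension, values in answers.items():
        if not values or any(not 1 <= v <= 5 for v in values):
            raise ValueError(f"{dimension}: ratings must be non-empty and within 1-5")
        scores[dimension] = round(float(mean(values)), 2)
    return scores


for name, score in dimension_scores(responses).items():
    print(f"{name}: {score}")
```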

🚀 The Evaluation Process

Conducting a maturity assessment requires a structured approach. Rushing this process leads to inaccurate data and poor recommendations. The following steps outline a robust methodology.

Step 1: Preparation and Scoping

Define the boundaries of the assessment. Determine which business units or technology domains will be included. Secure executive sponsorship to ensure access to key personnel. Select the maturity model that best fits the organizational context.
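Recording the scoping decisions in a structured form makes it easy to confirm that nothing was skipped before data collection starts. This is a hypothetical sketch: the `AssessmentScope` fields simply mirror the decisions listed above, and the sample values are invented.

```python
from dataclasses import dataclass, field


@dataclass
class AssessmentScope:
    """Hypothetical record of the Step 1 scoping decisions."""
    maturity_model: str      # e.g. "CMMI" or "TOGAF", chosen to fit the organizational context
    executive_sponsor: str   # the sponsor who secures access to key personnel
    business_units: list[str] = field(default_factory=list)
    technology_domains: list[str] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return scoping problems; an empty list means the scope is ready."""
        problems = []
        if not self.maturity_model.strip():
            problems.append("no maturity model selected")
        if not self.executive_sponsor.strip():
            problems.append("no executive sponsor named")
        if not self.business_units and not self.technology_domains:
            problems.append("no business units or technology domains in scope")
        return problems


scope = AssessmentScope(
    maturity_model="TOGAF",
    executive_sponsor="CIO",
    business_units=["Retail Banking"],
)
print(scope.validate())  # []
```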

Step 2: Data Collection

Gather evidence to support the assessment. This involves a mix of qualitative and quantitative methods.

- Interviews: Conduct structured interviews with architects, developers, and business leaders.

- Surveys: Distribute questionnaires to gather broad sentiment and self-reported metrics.

- Artifact Review: Analyze existing documentation, roadmaps, and standards.

- Observation: Attend architecture review meetings to observe decision-making in real time.
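Because these methods differ in reliability, one option is to combine the per-method scores for a dimension with a weighted average. The weights below are purely an assumption that favors artifact review and observation over self-reported surveys. The `combined_score` helper is likewise hypothetical, so adjust both to your own evidence policy.

```python
# Hypothetical weights: documentary and observed evidence count more than
# self-reported answers. They sum to 1.0.
SOURCE_WEIGHTS = {
    "interviews": 0.25,
    "surveys": 0.15,
    "artifact_review": 0.35,
    "observation": 0.25,
}


def combined_score(source_scores: dict[str, float]) -> float:
    """Weighted average of the 1-5 scores each collection method gave one dimension.

    Methods that were not used are skipped, and the remaining weights are
    renormalized so that they still sum to 1.
    """
    unknown = set(source_scores) - set(SOURCE_WEIGHTS)
    if unknown:
        raise ValueError(f"unknown sources: {sorted(unknown)}")
    if not source_scores:
        raise ValueError("at least one evidence source is required")
    used = {s: SOURCE_WEIGHTS[s] for s in source_scores}
    total = sum(used.values())
    return round(sum(source_scores[s] * w for s, w in used.items()) / total, 2)


governance = {"interviews": 3.0, "surveys": 4.0, "artifact_review": 2.0, "observation": 2.5}
print(combined_score(governance))
```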

Step 3: Gap Analysis

Compare the current state against the target maturity level. Identify specific gaps in processes, skills, or tools. Categorize these gaps by severity and impact. Distinguish between “must-have” fixes and “nice-to-have” improvements.
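The comparison and categorization can be sketched in a few lines. The rule that a gap of 1.5 levels or more, or any high-impact gap, counts as a must-have is a hypothetical threshold chosen for illustration. It is not part of any standard model, and the sample scores are invented.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    dimension: str
    current: float
    target: float
    impact: str  # "high", "medium", or "low", as judged by the assessment team

    @property
    def size(self) -> float:
        # Meeting or exceeding the target means there is no gap.
        return max(0.0, self.target - self.current)

    @property
    def category(self) -> str:
        # Hypothetical rule: large gaps and high-impact gaps are must-have fixes.
        if self.size == 0:
            return "no gap"
        if self.size >= 1.5 or self.impact == "high":
            return "must-have"
        return "nice-to-have"


gaps = [
    Gap("Governance and Strategy", current=2.0, target=3.0, impact="high"),
    Gap("Technology and Tools", current=3.5, target=4.0, impact="low"),
    Gap("People and Organization", current=1.5, target=3.0, impact="medium"),
]

# List the largest gaps first so the report leads with the most severe findings.
for gap in sorted(gaps, key=lambda g: g.size, reverse=True):
    print(f"{gap.dimension}: gap {gap.size:.1f} -> {gap.category}")
```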

Step 4: Reporting and Validation

Compile findings into a clear report. Present the results to stakeholders for validation. Ensure that the findings reflect the reality on the ground. Avoid bias by cross-referencing interview data with artifact reviews.
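Part of this cross-referencing can be mechanized by comparing what people report with what the artifacts show. In this hypothetical sketch, a dimension is flagged for follow-up when its self-reported score exceeds the evidence-based score by more than one point. The tolerance, the `flag_discrepancies` name, and the sample data are all assumptions.

```python
def flag_discrepancies(self_reported: dict[str, float],
                       evidence_based: dict[str, float],
                       tolerance: float = 1.0) -> list[str]:
    """List dimensions whose self-reported score exceeds the evidence-based
    score by more than `tolerance` points (the default is an assumption)."""
    flagged = []
    for dimension, claimed in self_reported.items():
        observed = evidence_based.get(dimension)
        # Dimensions without artifact evidence cannot be cross-checked, so skip them.
        if observed is not None and claimed - observed > tolerance:
            flagged.append(dimension)
    return flagged


interviews = {"Governance and Strategy": 4.0, "Methodology and Standards": 3.0}
artifacts = {"Governance and Strategy": 2.5, "Methodology and Standards": 2.5}
print(flag_discrepancies(interviews, artifacts))  # ['Governance and Strategy']
```

This check also helps with the political bias described later in this guide, since inflated self-assessments stand out against the documentary evidence.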

🧩 Interpreting the Results

Numbers alone do not tell the full story. Interpreting the results requires context. A score of “Level 3” might mean different things in different organizations depending on their size and complexity.

📉 Identifying Bottlenecks

Look for dimensions that score significantly lower than the others. If Governance is high but Technology is low, the organization may have strong rules but lack the tools to enforce them. If Technology is high but People is low, the organization may have capable tools but too few skilled staff to use them.
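A simple way to surface such imbalances is to compare each dimension with the average of the others. The sketch below uses a hypothetical one-point margin. Its invented scores reproduce the pattern of strong technology and weak people described above.

```python
from statistics import mean


def find_bottlenecks(scores: dict[str, float], margin: float = 1.0) -> list[str]:
    """Return dimensions scoring at least `margin` points below the average of
    the other dimensions (the default margin is a hypothetical threshold)."""
    bottlenecks = []
    for dimension, score in scores.items():
        others = [s for d, s in scores.items() if d != dimension]
        if others and mean(others) - score >= margin:
            bottlenecks.append(dimension)
    return bottlenecks


scores = {
    "Governance and Strategy": 3.5,
    "Methodology and Standards": 3.0,
    "People and Organization": 1.5,
    "Technology and Tools": 3.5,
}
print(find_bottlenecks(scores))  # ['People and Organization']
```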

📈 Tracking Progress Over Time

Maturity assessment is not a one-time event; it requires continuous monitoring. Establish a baseline score and schedule follow-up assessments annually or every two years. Use key performance indicators to track improvements in specific areas.
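Comparing a follow-up assessment with the baseline comes down to a per-dimension difference. Here is a minimal sketch, assuming both assessments used the same dimensions and scale. The scores and the `progress` helper are illustrative.

```python
def progress(baseline: dict[str, float], follow_up: dict[str, float]) -> dict[str, float]:
    """Change per dimension between two assessments (positive means improvement).

    Only dimensions scored in both assessments are compared.
    """
    return {
        dimension: round(follow_up[dimension] - baseline[dimension], 2)
        for dimension in baseline
        if dimension in follow_up
    }


baseline = {"Governance and Strategy": 2.0, "Technology and Tools": 3.5}
follow_up = {"Governance and Strategy": 3.0, "Technology and Tools": 3.25}
print(progress(baseline, follow_up))
# {'Governance and Strategy': 1.0, 'Technology and Tools': -0.25}
```

A negative value, like the Technology and Tools result here, signals a regression worth investigating before the next assessment cycle.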

⚠️ Common Challenges in Assessment

Even with a solid plan, obstacles can arise. Being aware of these challenges helps in mitigating risks.

- Political Bias: Stakeholders may inflate scores to look good. Mitigate this by using objective evidence.

- Siloed Information: Data may be trapped in separate departments. Ensure cross-functional access during data collection.

- Resistance to Change: Teams may fear that assessment leads to increased bureaucracy. Emphasize that the goal is efficiency, not control.

- Resource Constraints: Assessments take time and effort. Ensure dedicated resources are allocated to the task.

🛣️ Building the Improvement Roadmap

Once the assessment is complete, the focus shifts to action. A roadmap translates findings into a plan.

Short-Term Wins

Identify low-hanging fruit. These are improvements that require minimal investment but deliver visible value. Examples include standardizing naming conventions or establishing a regular architecture review cadence.

Medium-Term Initiatives

Address structural gaps. This may involve hiring specialized talent, implementing new processes, or acquiring necessary tools. Focus on stabilizing the core EA functions.

Long-Term Strategy

Align with business evolution. Plan for automation, advanced analytics, and deeper integration with business strategy. The goal is to move from a reactive posture to a proactive one.
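Findings can be sorted into these horizons roughly by estimating effort and value for each improvement. The 1-5 scales, the cut-offs, and the extra "deprioritize" bucket for low-value items are all assumptions for illustration. The sample backlog is loosely based on the examples in this section.

```python
from dataclasses import dataclass


@dataclass
class Improvement:
    name: str
    effort: int  # 1 (minimal investment) to 5 (major investment), team estimate
    value: int   # 1 (marginal) to 5 (high, visible business value), team estimate


def horizon(item: Improvement) -> str:
    """Assign an improvement to a roadmap horizon (hypothetical cut-offs)."""
    if item.value < 3:
        return "deprioritize"            # little value, whatever the effort
    if item.effort <= 2:
        return "short-term win"          # low-hanging fruit
    if item.effort <= 4:
        return "medium-term initiative"  # structural gaps
    return "long-term strategy"          # large, strategic investments


backlog = [
    Improvement("Standardize naming conventions", effort=1, value=3),
    Improvement("Hire specialized architecture talent", effort=3, value=4),
    Improvement("Automate architecture compliance checks", effort=5, value=5),
]
for item in backlog:
    print(f"{item.name}: {horizon(item)}")
```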

🔑 Metrics for Success

How do you know your improvement efforts are working? You need measurable outcomes.

- Reduction in Application Redundancy: Fewer duplicate systems across the enterprise.

- Decreased Time to Market: Faster delivery of new capabilities due to better alignment.

- Improved Compliance: Fewer audit findings related to IT governance.

- Higher Stakeholder Satisfaction: Positive feedback from business leaders regarding IT support.

- Cost Optimization: Reduced spend on maintenance of legacy systems.
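Some of these metrics can be computed directly from an application inventory. For example, application redundancy can be tracked as the share of business capabilities served by more than one system. The inventory below is invented, `redundant_capabilities` is a hypothetical helper, and mapping each application to exactly one capability is a simplifying assumption.

```python
from collections import defaultdict

# Hypothetical inventory: each application mapped to the business capability it supports.
inventory = [
    ("CRM-A", "Customer Management"),
    ("CRM-B", "Customer Management"),
    ("Payroll", "Human Resources"),
    ("Billing-Legacy", "Invoicing"),
    ("Billing-Cloud", "Invoicing"),
    ("Intranet", "Collaboration"),
]


def redundant_capabilities(apps: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Return the capabilities served by more than one application."""
    by_capability: dict[str, list[str]] = defaultdict(list)
    for app, capability in apps:
        by_capability[capability].append(app)
    return {cap: names for cap, names in by_capability.items() if len(names) > 1}


redundant = redundant_capabilities(inventory)
ratio = len(redundant) / len({cap for _, cap in inventory})
print(redundant)
print(f"{ratio:.0%} of capabilities have duplicate systems")  # 50% of capabilities ...
```

Recomputing this ratio at each assessment shows whether redundancy is actually falling over time.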

🤝 The Role of Stakeholders

EA is a collaborative effort. The success of maturity evaluation depends on engagement.

- Chief Information Officer (CIO): Provides strategic direction and resources.

- Chief Technology Officer (CTO): Ensures technical feasibility and standards.

- Business Unit Leaders: Validate that architecture supports business goals.

- Enterprise Architects: Execute the assessment and maintain the models.

📝 Final Thoughts on Continuous Improvement

Evaluating Enterprise Architecture Maturity Models is not about achieving a perfect score. It is about understanding the current state and moving forward with intention. The landscape of technology changes rapidly. A mature architecture practice is one that adapts to these changes without losing stability.

By systematically assessing capabilities, organizations can build a resilient foundation that supports innovation while managing risk. The journey requires patience and commitment, but the return on investment can be significant.

Start with a clear definition of what maturity means for your organization. Choose the right tools and frameworks. Engage your people. Measure your progress. And keep iterating. This is the path to a robust Enterprise Architecture practice.

❓ Frequently Asked Questions

How often should we conduct a maturity assessment?

Annual assessments are common. However, significant organizational changes, such as mergers or major digital transformations, may warrant an immediate review.

Can we use multiple maturity models?

Yes. Different departments may benefit from different models. For example, development teams might use CMMI while the executive team uses a strategic alignment model.

What is the biggest mistake in EA assessment?

Focusing solely on documentation. True maturity lies in behavior and decision-making, not just in the existence of documents or diagrams.